// FIELD_ANALYSIS

The AI Operations Economy_

Navigating the high-consequence shift from "AI experiments" to "AI infrastructure."

// 01_THE_REALITY_GAP

Across regulated industries and the public sector, the innovation honeymoon is over. We are entering "Pilot Purgatory," a state where organizations have successful demos but zero production systems they trust with core revenue or citizen data.

The value has shifted from the "AI Demo Economy" to the AI Operations Economy. The winners will be the ones who treat AI not as a project, but as infrastructure.

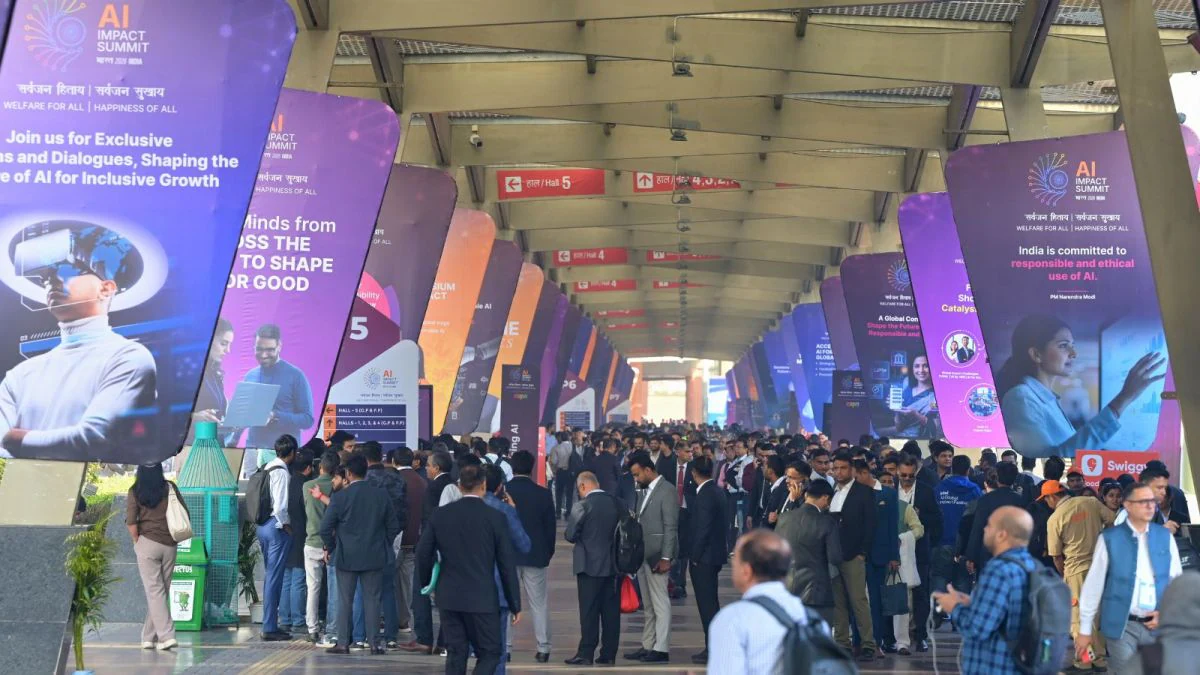

// FIELD_EVIDENCE: INDIA_AI_IMPACT_SUMMIT

// 02_THE_STRATEGIC_STACK

I. Workflow Integration

AI must move from a standalone tool to a core component of the enterprise stack. It must interact directly with ERPs and CRMs without human middleware.

II. Operational Accountability

Operations require clear ownership for monitoring, maintenance, and the "human-in-the-loop" protocols that ensure system integrity over time.

III. Decision Auditability

Systems must be architected for full traceability. Every decision must be explainable and compliant with evolving local data laws like the DPDP Act.

IV. Scalable Safety

We focus on hard guardrails that support the transition from a pilot to organization-wide use, preventing leakage and bias at the infrastructure level.

// 03_FIELD_LOGIC: SYSTEM_INTEGRATION_CASE

// SITUATION_REPORT

The primary bottleneck observed at the India AI Impact Summit was not a lack of compute or model accuracy, but a failure of Deterministic Auditability. Enterprises in BFSI and the public sector have successfully demonstrated "magic" in sandboxed pilots, yet remain paralyzed at the production gate. Current LLM-driven workflows lack a traceable path for critical decisions. Under the DPDP Act, "the AI suggested it" is not an acceptable response to a regulator or citizen.

// RESOLUTION_PROTOCOL [Allshore_ Standard]

Decision Orchestration Layer

Implementing a custom logic gate that checks every model output against hard-coded policy rules and logs the result, ensuring each output is paired with a deterministic "reasoning trace" that a human auditor can validate.
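
One way to picture such a gate is the minimal sketch below. It is illustrative only: the names `PolicyRule`, `DecisionTrace`, and `evaluate`, the rule IDs, and the thresholds are hypothetical and not part of any Allshore_ specification. Each model output is checked against explicit rules, and the per-rule results are stored as the trace an auditor can replay.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class PolicyRule:
    rule_id: str
    description: str
    check: Callable[[dict], bool]  # True when the output satisfies the rule


@dataclass(frozen=True)
class DecisionTrace:
    decision_id: str
    model_output: dict
    rule_results: dict  # rule_id -> pass/fail, the auditable trace
    approved: bool
    logged_at: str


def evaluate(decision_id: str, model_output: dict, rules: list) -> DecisionTrace:
    """Run every rule against the model output and record each result."""
    results = {rule.rule_id: bool(rule.check(model_output)) for rule in rules}
    return DecisionTrace(
        decision_id=decision_id,
        model_output=dict(model_output),
        rule_results=results,
        approved=all(results.values()),
        logged_at=datetime.now(timezone.utc).isoformat(),
    )


# Hypothetical rules for a loan workflow (thresholds are assumptions).
RULES = [
    PolicyRule("LOAN-001", "Amount must not exceed 500,000",
               lambda d: d["amount"] <= 500_000),
    PolicyRule("LOAN-002", "Model confidence must be at least 0.8",
               lambda d: d["confidence"] >= 0.8),
]

trace = evaluate("req-42", {"amount": 750_000, "confidence": 0.93}, RULES)
print(trace.approved)      # False
print(trace.rule_results)  # {'LOAN-001': False, 'LOAN-002': True}
```

Because the rules are plain functions with stable IDs, the same inputs always produce the same trace, which is the property an auditor needs.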

Embedded Core Integration

Moving AI from standalone "sidecar" applications into an embedded core component within existing enterprise stacks to eliminate human middleware and maintain domestic data residency.

Day 2 Accountability Metrics

Establishing monitoring regimes that trigger automated alerts on Model Drift, shifting the organization from a "launch and forget" mindset to one of active, governed maintenance.
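
As a rough illustration of an automated drift alert, the sketch below uses the Population Stability Index (PSI), one common drift statistic. The source does not name a metric, so PSI, the function names `psi` and `drift_alert`, the bin count, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, threshold=0.2):
    """Return True when the live sample has drifted past the threshold."""
    return psi(expected, actual) > threshold


baseline = [i / 100 for i in range(100)]       # scores seen at launch
shifted = [0.5 + i / 200 for i in range(100)]  # scores seen later

print(drift_alert(baseline, baseline))  # False
print(drift_alert(baseline, shifted))   # True
```

In practice a check like this would run on a schedule against recent production inputs or scores, with the alert routed to whoever owns the system.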

// ALTERNATIVE_VECTORS [CTO_Field_Synthesis]

Synthetic Parallel Validation

Instead of a hard cutover, organizations run AI systems in "Shadow Mode" alongside legacy logic for 90 days. Every AI-driven decision is compared against a deterministic baseline to measure variance before any citizen-facing output goes live, quantifying risk before exposure.
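
A shadow-mode comparison can be sketched as follows. This is a simplified illustration under stated assumptions: `run_shadow`, `legacy_decide`, `ai_decide`, and the credit-score cutoffs are hypothetical stand-ins. The legacy decision is the only one served, while the AI decision is recorded for comparison.

```python
def run_shadow(cases, legacy_decide, ai_decide):
    """Serve legacy decisions; record where the shadow AI would have differed."""
    served, mismatches = [], []
    for case in cases:
        legacy = legacy_decide(case)
        shadow = ai_decide(case)  # computed and logged, never served
        served.append(legacy)
        if shadow != legacy:
            mismatches.append({"case": case, "legacy": legacy, "shadow": shadow})
    return served, mismatches


def legacy_decide(case):
    # Deterministic baseline: approve at a score of 650 or above (assumed rule).
    return case["score"] >= 650


def ai_decide(case):
    # Stand-in for a model call; a real system would invoke the model here.
    return case["score"] >= 640


cases = [{"score": s} for s in (600, 645, 700, 655)]
served, mismatches = run_shadow(cases, legacy_decide, ai_decide)

disagreement_rate = len(mismatches) / len(cases)
print(served)             # [False, False, True, True]
print(disagreement_rate)  # 0.25
```

The disagreement rate, tracked over the shadow period, gives a concrete number to review before deciding on cutover, and the mismatch list shows which cases to inspect first.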

Federated Sovereignty Models

Moving toward a Federated Learning approach where models are trained locally across distributed nodes (e.g., bank branches). This ensures raw citizen data never leaves its original domestic node, satisfying residency requirements.
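
The aggregation step of federated learning can be sketched with federated averaging (FedAvg), shown here in its simplest form. The function name `federated_average` and the example numbers are illustrative assumptions. Each node sends only its locally trained weights and a sample count, and the coordinator combines them weighted by sample count.

```python
def federated_average(updates):
    """Combine local model weights, weighted by each node's sample count.

    `updates` is a list of (weights, n_samples) pairs. Only these values
    cross the node boundary; the raw training records stay on the node.
    """
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no samples reported by any node")
    size = len(updates[0][0])
    averaged = [0.0] * size
    for weights, n in updates:
        if len(weights) != size:
            raise ValueError("all nodes must report the same number of weights")
        for i, w in enumerate(weights):
            averaged[i] += w * (n / total)
    return averaged


# Two hypothetical branches report locally trained weights and sample counts.
branch_updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]

global_weights = federated_average(branch_updates)
print(global_weights)  # [2.5, 3.5]
```

A full training loop would repeat this round many times, sending the averaged weights back to each node as the starting point for its next local training pass.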

Human-in-the-Loop (HITL) Tiering

A tiered approach where low-consequence outputs are fully automated, while high-consequence decisions (claims denial, legal advice) are routed through a human-supervised Audit Gateway to maintain accountability without sacrificing speed.
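
The tiering logic can be sketched as a small router. The names `Route`, `AuditGateway`, and `HIGH_CONSEQUENCE`, and the decision-type labels, are hypothetical; the two high-consequence categories are taken from the examples above.

```python
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


# Decision types treated as high-consequence (assumed labels).
HIGH_CONSEQUENCE = {"claims_denial", "legal_advice"}


class AuditGateway:
    """Holds high-consequence decisions until a human reviewer releases them."""

    def __init__(self):
        self.pending = []

    def submit(self, decision_type, payload):
        """Queue high-consequence decisions; let everything else pass through."""
        if decision_type in HIGH_CONSEQUENCE:
            self.pending.append({"type": decision_type, "payload": payload})
            return Route.HUMAN_REVIEW
        return Route.AUTO


gateway = AuditGateway()
print(gateway.submit("address_update", {"customer": "c-1"}))  # Route.AUTO
print(gateway.submit("claims_denial", {"claim": "cl-9"}))     # Route.HUMAN_REVIEW
print(len(gateway.pending))                                   # 1
```

Keeping the high-consequence list as explicit data, rather than burying it in model prompts, makes the tier boundaries themselves reviewable.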

The winners in the next phase of AI will not be those who experiment the most, but those who make AI dependable and governed.